After a thrilling 3 days at the Velocity Conference in London, I’ve put together this blog post to give an overview of the more interesting talks from this year’s conference.

For those who are unfamiliar with the Velocity conference, you can quite safely associate it with all things related to web performance.

Gone in 60 Frames Per Second

“Performance must be designed as a ‘feature’ of your application from the get-go”

This was a talk by Addy Osmani on the magic ‘60 frames per second’. In this opening talk, we covered the pitfalls to avoid when it comes to web performance and were presented with two case studies: the Daft Punk parallax website and Pinterest.

I would highly recommend anyone interested in web performance to check out the links above.

In addition to the case studies, we were also given a sneak preview of one of the upcoming features in Chrome: the ability to use console.timeline() to give developers greater control over how they capture performance data for their website/webapp.
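
Here is a minimal TypeScript sketch of how a scoped capture might look. It assumes that `console.timeline(label)` is paired with a matching `console.timelineEnd(label)`. The `recordTimeline` helper, the `TimelineConsole` interface and the fake console are my own illustrations, not something shown in the talk.

```typescript
// Minimal shape of the console methods this sketch relies on (an assumption:
// a start call paired with a matching end call, both keyed by a label).
interface TimelineConsole {
  timeline(label: string): void;
  timelineEnd(label: string): void;
}

// Run `work` between a start and an end marker so that only this
// interaction is captured, even if `work` throws.
function recordTimeline<T>(target: TimelineConsole, label: string, work: () => T): T {
  target.timeline(label);
  try {
    return work();
  } finally {
    target.timelineEnd(label);
  }
}

// A fake console that records calls, standing in for the browser's console.
const timelineCalls: string[] = [];
const fakeConsole: TimelineConsole = {
  timeline: (label) => { timelineCalls.push(`start:${label}`); },
  timelineEnd: (label) => { timelineCalls.push(`end:${label}`); },
};

// Hypothetical interaction: pretend we rendered a grid and got 250 rows back.
const rowCount = recordTimeline(fakeConsole, "render-grid", () => 250);
```

Passing the console in as a parameter keeps the helper usable in builds where the method is not available, since you can hand it a no-op object instead.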

Responsive Images, Techniques and Beyond

Yoav Weiss presented a talk about the various factors that come into play with responsive images. He was one of the very few speakers who also considered ‘art direction’ as one of the ‘stakeholders’ of performance.

Yoav took us through the impact of something as simple as a drop shadow on page rendering performance and also commented on how the new iOS 7 UX direction is actually a good thing for web performance!

– SmashingMagazine article on the way forward for responsive images

High Performance Browser Networking

“The speed of light is not fast enough!”

This was one of my favourite talks of the event, given by Google web performance guru Ilya Grigorik.

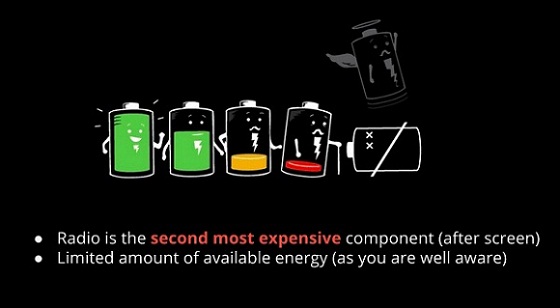

I found this talk very insightful, especially when diving into the impact mobile web performance has on the device battery.

Examples included the cost of radio operations on the phone and of waking a device from a sleep state. Ilya also put his mythbuster hat on regarding 4G connections being the promised land.

Hands-on Web Performance Optimization Workshop

This talk by Andy Davies (Asteno) and Tobias Baldauf (Freelancer) was an interactive workshop in which the audience suggested websites to dive into and analyse for performance. This was the first of many shoutouts to an increasingly popular web performance analysis website, WebPageTest.org. WebPageTest is an online tool which allows you to target specific browsers on devices and view the ‘waterfall’ of a page.

It also allows you to do visual comparisons and capture TCP dumps, which can be useful if your website is seeing packet loss issues.

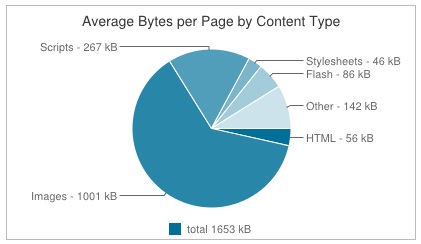

They ended by talking a little bit about HttpArchive.

HttpArchive (currently in beta) is an online resource which has archived data on thousands and thousands of websites, ranging from trend analysis and third-party library adoption to webpage scores on Google PageSpeed.

Give Responsive Design a Mobile Performance Boost

“Slow is not cool… even less cool on mobile”

John Arne Saeteras from MobileTech presented a number of interesting case studies which aimed to quantify the impact of ‘good performance’.

Amazon found that making their website 100ms faster resulted in a 1% increase in revenue, which comes to approximately $17 million.

Whilst I’m sure there were MANY other factors involved, there seem to be a lot of studies which discuss the psychology of web performance and how exceeding certain time thresholds GREATLY increases the potential customer bounce rate.

Fun facts

Loading www.apple.com a year ago would use 1.41% of your iPhone’s battery life. The average webpage is 1.6MB in size and makes 95 HTTP requests.

“When all you have is a hammer, all problems look like a nail”

Responsive design != Mobile friendly

The BBC news website used to be a very simple and ‘plain’ webapp which users were quite happy with at the time. After they switched to their current ‘newer’ responsive design, opinion was quite split among the users. See for yourself!

Performance Testing in Continuous Integration

This talk was delivered by Michael Klepikov from Google and focused on good practice for embedding performance tracking in your development cycles.

Why is it hard?

The truth is that web performance is a domain of few experts. On top of this, the maintenance effort and overhead are often higher, especially when it comes to integrating performance testing into your automated build in a reliable way.

The nature of UI performance tests is that they will quickly ‘rot’ if no one notices, or worse still, if neither a person nor an automated step is actually validating/comparing the data against previous benchmarks.

In complex web applications, in particular trading applications in the browser, it’s not simply a case of measuring the load time. The job isn’t just to load something within a magic threshold, it’s to maintain a good user experience for the duration of that user’s interaction with your application.

Having to fix performance issues in production is obviously far more expensive than catching them earlier. So what’s the answer? What should we all be doing?

Aim to have your functional tests form the basis of your performance tests

Sounds simple enough, right?

Automate your performance tests to exercise real user scenarios repeatedly. Use whatever metric-capturing tools are available for the things you care about, and output the results to log/CSV files as artifacts of the performance test.

Once you have the above, you are perfectly placed to have your build system use these artifacts to do the grunt-work and generate the necessary analysis reports/graphs for your QA/team to validate and monitor on a regular basis.
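
As a rough sketch of the ‘grunt-work’ step, here is how a build script might compare the latest run against a previous benchmark. Everything in it is an assumption of mine rather than something from the talk: the `MetricSample` shape, the `findRegressions` helper, the 10% tolerance and the scenario names are all hypothetical.

```typescript
// One captured metric, e.g. a row parsed from the CSV artifact of a test run.
interface MetricSample {
  name: string;
  valueMs: number;
}

// Return the names of metrics that got slower than the baseline by more than
// `tolerance` (0.1 means 10%). Metrics missing from the baseline are skipped.
function findRegressions(
  baseline: MetricSample[],
  current: MetricSample[],
  tolerance: number
): string[] {
  const baselineByName: { [name: string]: number | undefined } = {};
  for (const sample of baseline) {
    baselineByName[sample.name] = sample.valueMs;
  }
  const regressions: string[] = [];
  for (const sample of current) {
    const previous = baselineByName[sample.name];
    if (previous !== undefined && sample.valueMs > previous * (1 + tolerance)) {
      regressions.push(sample.name);
    }
  }
  return regressions;
}

// Hypothetical numbers for two user scenarios.
const baselineRun: MetricSample[] = [
  { name: "open-order-ticket", valueMs: 120 },
  { name: "scroll-blotter", valueMs: 16 },
];
const latestRun: MetricSample[] = [
  { name: "open-order-ticket", valueMs: 125 }, // within 10% of 120
  { name: "scroll-blotter", valueMs: 24 },     // well over 10% slower than 16
];

const regressed = findRegressions(baselineRun, latestRun, 0.1);
```

A build step could fail, or at least flag the run for QA, whenever the returned list is not empty, which is one way to stop the tests from quietly rotting.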

Are Today’s Best Practices… Tomorrow’s Performance Anti-Patterns?

A very ‘out there’ title for a talk and definitely one of the most interesting of the entire event for me.

Andy Davies (Asteno) talked about Steve Souders’ 14 rules for faster-loading websites, a great set of guidelines for any performance-minded web developer to consider.

HTTP 2.0, which will be based on Google’s SPDY network protocol, was another topic of discussion. It’s quite similar to HTTP 1.1 but focuses on reducing web page load latency and improving web security. It has certain advantages over HTTP 1.1 in making use of a single connection for multiple resources but, as of now, it also has issues with things like the performance of merging CSS.

Comparisons of the two, as well as a weighing up of other ‘best practices’ among today’s web performance techniques, can be found in the slides shared below. These include lazy-loading, sprites, CSS merging and data URIs.

Andy also mentioned how some websites are making use of inline styling for above-the-fold webpage content simply to achieve a faster page render time, using ‘normal’ CSS/HTML and JS for the rest of the page.

DOM to Pixels – accelerating your rendering performance

This was earmarked as the ‘headline act’ by Steve Souders: a talk by Google’s Paul Lewis.

“Okay so you’ve loaded your page content quickly… you still have to then render it”

A lot of talks at the event placed emphasis on page load times and the waterfall of the page content. This is all very well and good, but the browser then still needs to paint this to the screen.

How fast is fast, anyway?

In short, 100ms is a safe number to be considered fast when it comes to rendering the page. Given that most devices have a 60Hz refresh rate, the frame time within which your application has to be ‘fast’ works out at approximately 16ms.

1000ms / 60 = ~16.67ms
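
The same arithmetic as a small TypeScript sketch, in case you want to reason about other refresh rates or about how many frames a slow update costs. The `frameBudgetMs` and `droppedFrames` helpers are my own illustration and not from the talk.

```typescript
// Time available to produce one frame at a given refresh rate, in milliseconds.
function frameBudgetMs(refreshRateHz: number): number {
  if (refreshRateHz <= 0) {
    throw new Error("refresh rate must be positive");
  }
  return 1000 / refreshRateHz;
}

// Number of refresh intervals missed by a frame that took `frameTimeMs` to produce.
function droppedFrames(frameTimeMs: number, refreshRateHz: number): number {
  const budget = frameBudgetMs(refreshRateHz);
  return Math.max(0, Math.ceil(frameTimeMs / budget) - 1);
}

// At 60Hz the budget is roughly 16.67ms per frame.
const budgetAt60Hz = frameBudgetMs(60);

// A 40ms update spans three 60Hz intervals, so two frames are missed.
const missedFrames = droppedFrames(40, 60);
```

Bear in mind that the browser needs part of that budget for its own work, so the time left for your code is smaller than the raw figure.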

What can I animate in my CSS and still have good performance?

- scale -> transform: scale(n)

- move -> transform: translateX(npx)

- rotation -> transform: rotate(ndeg)

- fade -> opacity: 0..1

Animate anything else and you will struggle to hit 60fps, especially on mobile.
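
One way to put the list above to work is a small audit of the properties an animation touches. This is only a sketch: `auditAnimatedProperties` and `buildTransform` are hypothetical helpers of mine, and the ‘cheap’ list simply mirrors the four cases above (which all boil down to `transform` and `opacity`).

```typescript
// Properties from the list above that can be animated cheaply.
const CHEAP_TO_ANIMATE = ["transform", "opacity"];

// Split the properties an animation touches into cheap and costly ones.
function auditAnimatedProperties(properties: string[]): { cheap: string[]; costly: string[] } {
  const cheap: string[] = [];
  const costly: string[] = [];
  for (const property of properties) {
    if (CHEAP_TO_ANIMATE.indexOf(property) !== -1) {
      cheap.push(property);
    } else {
      costly.push(property);
    }
  }
  return { cheap, costly };
}

// Build a single `transform` value that moves, scales and rotates in one go.
function buildTransform(xPx: number, scale: number, rotateDeg: number): string {
  return `translateX(${xPx}px) scale(${scale}) rotate(${rotateDeg}deg)`;
}

// `left` and `width` end up in `costly`; `opacity` and `transform` in `cheap`.
const audit = auditAnimatedProperties(["left", "opacity", "transform", "width"]);

// A move that might otherwise animate `left` can be expressed as a transform.
const slideIn = buildTransform(120, 1.5, 45);
```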

Paul went into a lot of detail on specifics: scheduling your visual updates, avoiding the use of setTimeout() and setInterval(), and being conscious of the impact of scrolling in your application with regard to screen painting.
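
To illustrate the scheduling point, here is a minimal sketch of batching visual updates into a single frame callback rather than firing each one from its own timer. It is my own illustration, not code from the talk. The scheduler is passed in as a parameter so the example runs outside a browser; in a real page I would assume you pass something like `(cb) => requestAnimationFrame(cb)`.

```typescript
// Anything that can schedule a callback for the next frame. In a browser this
// would wrap requestAnimationFrame; the parameter keeps the sketch testable.
type FrameScheduler = (callback: () => void) => void;

// Collect visual updates and run them together in one scheduled frame,
// instead of firing each one from its own setTimeout()/setInterval().
function createFrameBatcher(schedule: FrameScheduler): (update: () => void) => void {
  let pending: Array<() => void> = [];
  let frameRequested = false;

  return function enqueue(update: () => void): void {
    pending.push(update);
    if (frameRequested) {
      return; // a frame is already booked; this update will ride along
    }
    frameRequested = true;
    schedule(() => {
      const updates = pending;
      pending = [];
      frameRequested = false;
      for (const run of updates) {
        run();
      }
    });
  };
}

// Fake scheduler standing in for requestAnimationFrame.
const scheduledFrames: Array<() => void> = [];
const queueUpdate = createFrameBatcher((callback) => { scheduledFrames.push(callback); });

// Two hypothetical visual updates queued back to back.
const applied: string[] = [];
queueUpdate(() => { applied.push("move header"); });
queueUpdate(() => { applied.push("fade tooltip"); });

// Both updates share a single booked frame.
const framesBooked = scheduledFrames.length;

// Simulate the browser firing the frame.
for (const frame of scheduledFrames) {
  frame();
}
```

The design choice here is that updates queued while a frame is already booked do not schedule another one, so a burst of changes results in one batch of work per frame.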

To see the content in more detail, visit jankfree.org.

Summary

Lots of great talks. Even greater to have the spotlight on mobile web performance in particular.

The event was well run and had a strong turnout from the community. The tooling support for diving into performance is improving all the time, so we can make better-informed decisions about our web applications. I wasn’t able to include them all in this post, but there was a lot of great content delivered across all the talks at this event. I’m looking forward to seeing how far we’ve come in a year’s time.