There were two days of workshops ahead of the conference day, and a ‘Meetup’ on the Thursday evening, which meant that only 10 of the several hundred QAs and testers present made it to the 6.30am run by the pier.

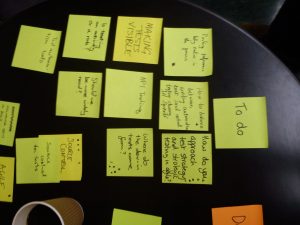

Lean Coffee

The conference day started at 8am with a Lean Coffee session. Our group chose ‘Recruiting good Software Developers in Test’ as the first topic to discuss. We agreed that it was difficult to attract good candidates, especially for permanent roles. It also became apparent that, in many people’s minds, QA roles are almost split in two: the more traditional manual QA, and the SDET. It is difficult to find good SDETs who also have the ‘testing mindset’, not least because testing is rarely taught to graduates in their Computer Science courses, something that a recent graduate who started at Caplin had also reported. One delegate did say that Sheffield and Leeds universities were now teaching more about testing, but that this was a recent development.

The conference proper started about 9.15am with a great talk on performance testing. This was the first of 10 talks throughout the day. See Testbash 3 talks for the full list. The ones that stood out for me are highlighted below:

Managing Application Performance Throughout the LifeCycle – Scott Barber

Scott’s was an entertaining start to the day, once he had ensured that we were ‘fully caffeinated’. He used the example of a fashion webcast, where capacity planning had accounted for the ‘known audience’ but not the ‘unknown audience’, to highlight the long-term effects that bad performance can have. The company in question withdrew from the fashion market and has concentrated on its core business ever since, so the effect of bad performance is still felt years later.

He then explained his T4 model (Target; Test; Trend; Tune), and advised that it be applied not just to the application as a whole, but also to each component within the application, the infrastructure, and even down to the unit level if necessary. He suggested that the T4 process can be iterated at all of these levels, and at any point in the process. Final performance won’t be known until the end, but that doesn’t prevent the T4 model from being applied to units or components earlier on.

Helping the new tester to get a running start – Joep Schuurkes

Joep compared the help we normally give to a new tester on a project to giving someone new to the city a map of Rotterdam, and then expecting them to be able to navigate the city like a taxi driver. He had even worked on a project where the rest of the team were on holiday for 2 weeks when the new person started, so they were just left a link to the application and asked to get on with testing. The new person did, but was then asked why they had not tested a feature hidden behind a small button at the bottom right of the screen, as that was the most important functionality: the bit that the users wanted most.

Joep recommended pair testing, and a structured introduction both to the application under test and to the methods that the team uses. Good setup and process documentation can also be useful, although a later speaker pointed out that ‘documents are where information goes to die’. A balance is needed; a team wiki hub and a team Skype can also help to onboard a new team member effectively.

Proper onboarding empowers people for success, rather than throwing them in the deep end and hoping for the best.

Inspiring Testers – Stephen Blower

Stephen was a new speaker who, the year before, had taken part in the 99 second talks that end the conference. By his own admission, he had been a ‘factory tester’, turning up and pushing buttons, and not taking much interest in the process. He had then discovered Agile testing and rapid testing, and developed a passion for testing that he had not known he possessed.

99 second talks

Anyone could sign up for these during the day, and a large group lined up to take their turn to speak. There were many speakers (and one mime), but the last made the most telling points. She talked about how important the appropriate testing skills are to the testing role, and to enabling the delivery of quality software on time. She highlighted how testers describe their skills: attention to detail, planning, diligent, thoughtful, reflective, intuitive, critical, good communication skills, breaks things, good at finding bugs, etc.

Her point was that if these are the best words testers can find to describe their own skills, then why would anyone else think that a tester’s job involves anything more than just doing those things? Her challenge to the conference was to find a better vocabulary to describe the skills that testers possess and use.

This last point almost brought us full circle, to the Lean Coffee conversation of the morning. It may be harder to find a good SDET, but it is easier to describe the skills that they require, apart from the ‘testing mindset’.

Thanks for the write up Mike. 🙂