Code coverage measures which parts of the codebase are executed when the tests run, and so identifies the parts that have no tests.

Why do we need it?

Five questions often arise in software development and test automation:

- Can I refactor my code quickly and safely?

- Is my application robust? Will it break under certain conditions?

- Are there enough behavioural tests for the application we’ve built?

- Are my tests actually testing anything useful?

- Do my behavioural tests address the business requirements?

How does it help?

Coverage can help answer each of these questions:

- Can I refactor my code quickly and safely? With sufficient unit test coverage, measured at branch level, a change that breaks a unit is likely to fail a test immediately, which supports a ‘fail fast’ way of working. The tests also document the expected technical behaviour of each unit. Together, these let developers refactor quickly and safely.

- Is my application robust? Maintaining good unit test coverage gives us confidence that few lines of code are untested, which reduces the likelihood of the application failing with an unexpected exception.

- Are there enough behavioural tests? Measuring code coverage for the behavioural tests (which are expressed in business terms) gives us confidence that we have met the customer’s requirements. Sufficient coverage at this level reduces the likelihood of bugs being found later in the cycle, during exploratory testing, where they are more costly and time-consuming to fix.

- Are my tests actually testing anything useful? Coverage only shows that code was executed, not that its results were checked. Mutation testing complements it: it makes small deliberate changes (mutants) to the code and checks that the unit and behavioural tests then fail. This gives us confidence that the tests enforce behaviour rather than just running code.

- Do my behavioural tests address the business requirements? This question is harder to answer, but a high-level traceability matrix can cross-reference the key business use cases against suites of tests. Behavioural tests should also be written in a style, and produce reports, that QA engineers and product owners (POs) can review.
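To make the difference between line coverage, branch coverage and mutation testing concrete, here is a minimal hand-rolled sketch in Python. The `shipping_cost` rule, its mutant and both tests are hypothetical; in practice a coverage tool and a mutation testing tool would produce the reports and mutants, and this code only imitates the idea.

```python
# Hand-rolled illustration of line vs branch coverage and of mutation testing.
# All names here are hypothetical examples, not part of any real tool.

def shipping_cost(order_total: float) -> float:
    """Hypothetical rule: orders of 50 or more ship free, others cost 5."""
    cost = 5.0
    if order_total >= 50:
        cost = 0.0
    return cost


def mutant_shipping_cost(order_total: float) -> float:
    """A mutant of shipping_cost: the boundary operator >= changed to >."""
    cost = 5.0
    if order_total > 50:
        cost = 0.0
    return cost


def weak_test(fn) -> bool:
    # Every line of fn runs (100% line coverage), but the False branch of
    # the `if` is never taken and the result is never checked.
    fn(100)
    return True


def strong_test(fn) -> bool:
    # Takes both branches, checks the boundary and asserts on the results.
    return fn(100) == 0.0 and fn(10) == 5.0 and fn(50) == 0.0


def mutant_killed(test) -> bool:
    # A mutant is "killed" when a test that passes on the original fails on it.
    return test(shipping_cost) and not test(mutant_shipping_cost)


print("weak test kills mutant:", mutant_killed(weak_test))      # False
print("strong test kills mutant:", mutant_killed(strong_test))  # True
```

The weak test executes every line yet checks nothing, so the mutant survives. Tests at 100 and 10 alone would cover both branches and still let this boundary mutant survive; only the check at exactly 50 kills it, which is why mutation testing tells us something that branch coverage does not.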

Usage

How we apply coverage matters. Rather than chasing an ideal target percentage, we should aim to prevent coverage from falling and focus on areas where coverage is clearly insufficient. Coverage metrics should be an invitation to discussion, for example at the pull request (PR) stage, rather than a tool for policing quality. Generally, it is best to let the engineering team decide when and how to use them.
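One way to prevent reductions in coverage without setting a fixed target is a ratchet: compare each build’s coverage with a stored baseline and flag any drop. The minimal Python sketch below assumes reports in Cobertura XML format, whose root `<coverage>` element carries `line-rate` and `branch-rate` attributes as fractions between 0 and 1. The function names, the inline sample reports and the `tolerance` default are illustrative assumptions, not part of any existing tool.

```python
import xml.etree.ElementTree as ET


def read_rates(cobertura_xml: str) -> dict:
    """Read the overall line and branch rates from a Cobertura XML report."""
    root = ET.fromstring(cobertura_xml)  # root element is <coverage ...>
    return {
        "line": float(root.get("line-rate", "0")),
        "branch": float(root.get("branch-rate", "0")),
    }


def coverage_regressions(baseline: dict, current: dict, tolerance: float = 0.001) -> list:
    """Return one message per metric that fell below its baseline value.

    `tolerance` (an assumed default) absorbs tiny rounding differences.
    """
    problems = []
    for metric, old in baseline.items():
        new = current.get(metric, 0.0)
        if new + tolerance < old:
            problems.append(f"{metric} coverage fell from {old:.1%} to {new:.1%}")
    return problems


# Usage with hypothetical reports: line coverage rose, branch coverage fell.
baseline_report = '<coverage line-rate="0.82" branch-rate="0.70"></coverage>'
current_report = '<coverage line-rate="0.83" branch-rate="0.65"></coverage>'

for message in coverage_regressions(read_rates(baseline_report), read_rates(current_report)):
    print(message)  # branch coverage fell from 70.0% to 65.0%
```

The sketch only reports regressions. Whether a CI job fails on them or simply posts them as a PR comment for discussion is left to the team.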

Tools

Some example coverage tools for Caplin’s tech stack:

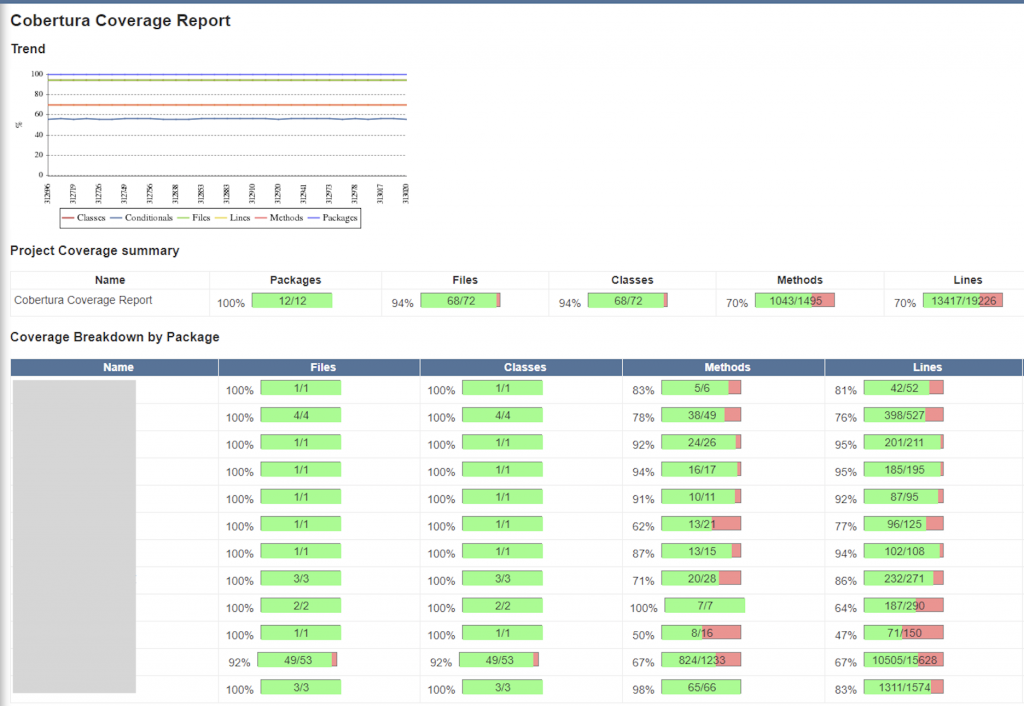

- JaCoCo for Java

- Istanbul for JavaScript (run via Karma)

- LCOV, a front-end to GCC’s gcov, for C and C++, with its output converted to Cobertura format for display in Jenkins.